In this edition of Who’s who in the Zoo, meet Ameenat Lola Solebo, who leads Eyes on Eyes, a Zooniverse project that aims to improve how we monitor children with a blinding eye disorder.

Who: Ameenat Lola Solebo, Clinician Scientist (Paediatric Ophthalmology / Epidemiology & Health Data Science)

Location: UCL GOS Institute of Child Health and Great Ormond Street Hospital

Zooniverse project: Eyes on Eyes

What is your research about?

We’re asking Zooniverse volunteers to label eye images of children with, or at risk of, a blinding disease called uveitis. Early detection of uveitis means less chance of blindness, but it is becoming increasingly difficult for children to access the specialised experts they need to detect uveitis at an early stage (before the uveitis has caused damage inside the eye). New ‘OCT’ (optical coherence tomography) eye cameras may provide images detailed enough to allow even non-specialists to detect uveitis at the early stages. Our research studies develop and evaluate OCT methods for uveitis detection and monitoring in children, and during these studies we collect a lot of data from children’s eyes, sometimes several hundred scans in different positions from just one child. We are hoping that we won’t need to keep collecting this many images in the long run, but first we have to know where and how best to look for problems.

How do Zooniverse volunteers contribute to your research?

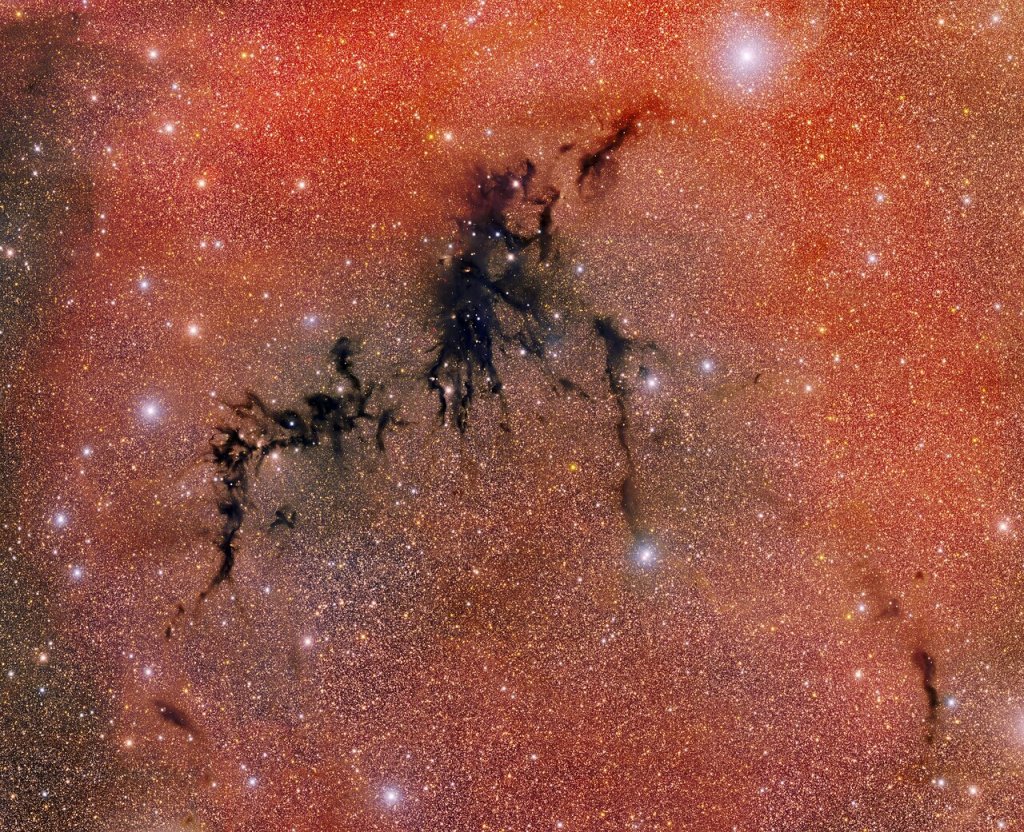

Zooniverse volunteers are asked to label scans in different ways. They can tell us what they think of the quality of an individual scan: is it good enough to be useful? They can point out which features of the scan are making it poorer quality so that we can judge how useful it might be. They can draw regions of interest on the scan, helping to focus attention. They can also pick up the signs of uveitis: inflammatory cells floating around in the usually dark space inside the eye, looking like bright stars in a dark sky. They can tell us whether they can see cells, how many cells they can see, and they can locate each cell for us. The quality judgements submitted by the volunteers have compared favourably to expert judgement, which is great, and we have since developed a quality assessment algorithm based on the volunteers’ labels. We are now looking at just how accurate the volunteer assessments of the images are compared to the clinical diagnosis of the child.

What’s a surprising fact about your research field?

Uveitis is often autoimmune, meaning your body turns against the delicate tissues in your eye — especially the uvea, a highly vascular layer that includes the iris. It’s like friendly fire… which is such an awful term, isn’t it?

What first got you interested in research?

I was tired of answering “we don’t know” when parents asked us questions about their child’s eye disease.

What’s something people might not expect about your job or daily routine?

Someone once asked me how I put the eye back after eye surgery. Ophthalmic surgeons do not, I repeat, do not remove the eye from patients to operate on them! Also, I think people may be surprised by how beautiful the eye looks when viewed at high magnification. Ophthalmologists use a microscope called a slit lamp to look at and into a patient’s eye. The globe is such a fragile, well-constructed, almost mystical body part, and vision is practically magic!

Outside of work, what do you enjoy doing?

I recently started karate. I am by far the oldest white belt and I am really loving making the KIAI! noises.

What are your favourite citizen science projects?

The Etch A Cell projects, because I learnt so much about how to run my own project from that team, and Black Hole Hunters, because they are great at describing what they have done with volunteer data.

What guidance would you give to other researchers considering creating a citizen science project?

Do it! And do it on Zooniverse, because the community is super engaged and the back-of-house team is so supportive. Stay active on the Talk boards to engage volunteers. And test, refine, test, refine your project until you start seeing it in your sleep.

And finally…

Thank you to all the volunteers who have been helping us!