Our research group at Syracuse University spends a lot of time trying to understand how participants master tasks given the constraints they face. We conducted two studies as a part of a U.S. National Science Foundation grant to build Gravity Spy, one of the most advanced citizen science projects to date (see: www.gravityspy.org). We started with two questions: 1) How best to guide participants through learning many classes? 2) What type of interactions do participants have that lead to enhanced learning? Our goal was to improve experiences on the project. Like most internet sites, Zooniverse periodically tries different versions of the site or task and monitors how participants do.

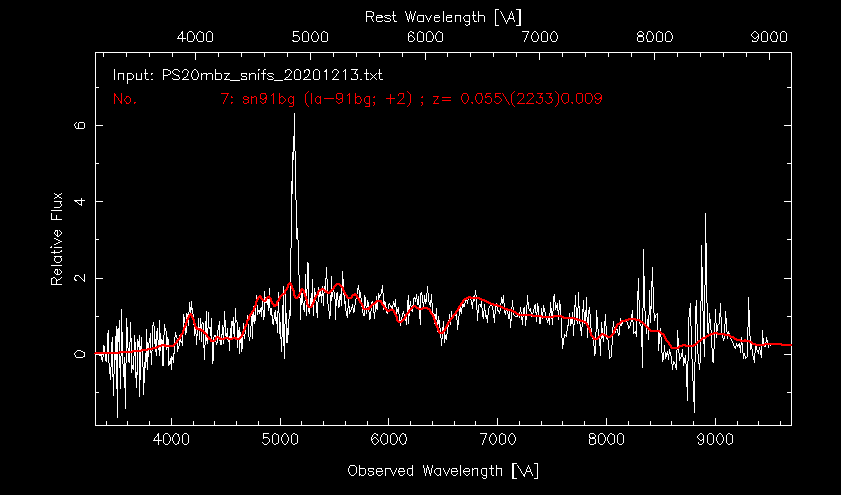

We conducted two Gravity Spy experiments (the results were published via open access: article 1 and article 2). As in other Zooniverse projects, Gravity Spy participants supply judgments on an image subject, noting which class the subject belongs to. Participants also have access to learning resources such as the field guide, about pages, and ‘Talk’ discussion forums. In Gravity Spy, we ask participants to review spectrograms to determine whether a glitch (i.e., noise) is present. The participant classifications are supplied to astrophysicists who are searching for gravitational waves. The classifications help separate glitches from valid gravitational-wave signals.

Gravity Spy combines human and machine learning components to help astrophysicists search for gravitational waves. Its machine learning algorithms estimate the likelihood that a glitch belongs to a particular glitch class (currently, 22 known glitch classes appear in the data stream); the output is a percentage likelihood for each class.

Figure 1. The classification interface for a high level in Gravity Spy

Gradual introduction to tasks increases accuracy and retention

The literature on human learning is unclear about how many classes people can learn at once. Showing too many glitch class options might discourage participants, since the task may seem too daunting, so we wanted to develop training that still allowed them to make useful contributions. We decided to implement and test leveling, where participants gradually learn to identify glitch classes across different workflows. In Level 1, participants see only 2 glitch class options; in Level 2, they see 6; in Level 3, they see 10; and in Level 4, they see all 22. We also used the machine learning results to route more straightforward glitches to lower levels and more ambiguous subjects to higher levels. Participants in Level 1 therefore only saw subjects that the algorithm was confident a participant could categorize accurately. When the percentage likelihood was low (meaning the classification task was more difficult), we routed the subject to a higher level.
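The routing idea above can be sketched in a few lines of Python. This is only an illustration of the logic, not the project's actual code: the confidence cutoffs, the class names, and the `route_subject` function are all assumptions made for the example.

```python
from typing import Dict

# Hypothetical confidence cutoffs per level; the real thresholds are not
# given in this post and are assumed here for illustration only.
MIN_CONFIDENCE = {1: 0.95, 2: 0.85, 3: 0.70}


def route_subject(class_likelihoods: Dict[str, float],
                  first_level_for_class: Dict[str, int]) -> int:
    """Return the lowest level (1-4) at which a subject could be shown.

    class_likelihoods: glitch class -> model likelihood in [0, 1].
    first_level_for_class: glitch class -> first level offering that class.
    """
    # The model's best guess and how confident it is in that guess.
    top_class = max(class_likelihoods, key=class_likelihoods.get)
    confidence = class_likelihoods[top_class]
    first_level = first_level_for_class[top_class]

    # A subject goes to a level only if that level offers the predicted
    # class and the model is confident enough for that level.
    for level in (1, 2, 3):
        if level >= first_level and confidence >= MIN_CONFIDENCE[level]:
            return level
    # Everything ambiguous ends up in the top level with all classes.
    return 4


# Placeholder class names, not real Gravity Spy labels.
levels = {"class_a": 1, "class_b": 1, "class_c": 3}
easy = route_subject({"class_a": 0.98, "class_b": 0.02}, levels)
hard = route_subject({"class_a": 0.50, "class_b": 0.50}, levels)
```

With these assumed cutoffs, `easy` is routed to Level 1 and `hard` to Level 4, which mirrors the idea that low-likelihood subjects are left for more experienced participants.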

We ran an experiment to determine what this gradual introduction to the classification task meant for participants. One group of participants was funneled through the training described above (we called it machine learning guided training, or MLGT); another group was given all 22 classes at once. Here’s what we found:

- Participants who completed MLGT were more accurate than participants who did not receive the MLGT (90% vs. 54%).

- Participants who completed MLGT executed more classifications than participants who did not receive the MLGT (228 vs. 121 classifications).

- Participants who completed MLGT had more sessions than participants who did not receive the MLGT (2.5 vs. 2 sessions).

The usefulness of resources changes as tasks become more challenging

Anecdotally, we know that participants contribute valuable information on the discussion boards, which is beneficial for learning. We were curious about how participants navigated all the information resources on the site and whether those resources improved people’s classification accuracy. Our goal was to (1) identify learning engagements, and (2) determine whether those learning engagements led to increased accuracy. We turned on analytics data collection and mined the data to determine which types of interactions (e.g., posting comments, opening the field guide, creating collections) improved accuracy. We conducted a quasi-experiment at each workflow, isolating the gold standard data (i.e., the subjects with a known glitch class). We looked at each occasion on which a participant classified a gold standard subject incorrectly (Classification A) and identified the actions that participant took before their next classification of the same glitch class (Classification B). We then tested statistically which of those actions were associated with improved accuracy, and the results were striking. Here’s what we found:

- In Level 1, no learning actions were significant. We suspect this is because the tutorial and other materials created by the science team are comprehensive, and most people are accurate in workflow 1 (~97%).

- In Level 2 and Level 3, collections, favoriting subjects, and the search function were most valuable for improving accuracy. Here, participants’ agency seems to support learning. Anecdotally, we know people collect and learn from ambiguous subjects.

- In Level 4, we found that actions such as posting comments and viewing the collections created by other participants were most valuable for improving accuracy. Since the most challenging glitches are administered in workflow 4, participants seek feedback from others.
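To make the quasi-experiment setup concrete, here is a minimal Python sketch of how the actions between a missed gold standard classification and the next classification of the same glitch class could be extracted from one participant's event log. The `Event` and `Window` structures, the action names, and the `learning_windows` function are hypothetical and are not taken from the project's analysis code; the statistical modelling that followed is not shown.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Event:
    kind: str                           # "classification" or an action name
    true_class: Optional[str] = None    # gold standard label, if any
    chosen_class: Optional[str] = None  # the participant's answer


@dataclass
class Window:
    glitch_class: str
    actions: List[str]  # actions taken between the two classifications
    improved: bool      # True if the follow-up classification was correct


def learning_windows(events: List[Event]) -> List[Window]:
    """Collect action windows from one participant's time-ordered events."""
    windows = []
    for i, first in enumerate(events):
        # Only incorrect gold standard classifications open a window.
        if (first.kind != "classification" or first.true_class is None
                or first.chosen_class == first.true_class):
            continue
        actions = []
        for later in events[i + 1:]:
            if later.kind != "classification":
                actions.append(later.kind)
            elif later.true_class == first.true_class:
                # Next classification of the same glitch class closes it.
                correct = later.chosen_class == later.true_class
                windows.append(Window(first.true_class, actions, correct))
                break
    return windows


# Made-up example log with placeholder class and action names.
log = [
    Event("classification", "class_a", "class_b"),  # missed gold standard
    Event("open_field_guide"),
    Event("classification", "class_c", "class_c"),
    Event("post_comment"),
    Event("classification", "class_a", "class_a"),  # same class, now correct
]
result = learning_windows(log)
```

Each window records which actions happened in between and whether the follow-up classification was correct, which is the kind of table a later statistical test could be run on.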

The one-line summary of this experiment is that when tasks are more straightforward, learning resources created by the science teams are most valuable; however, as tasks become more challenging, learning is better supported by the community of participants through the discussion boards and collections. Our next challenge is making these types of learning engagements visible to participants.

Note: We would like to thank the thousands of Gravity Spy participants without whom this research would not be possible. This work was supported by U.S. National Science Foundation grants No. 1713424 and 1547880. Check out Citizen Science Research at Syracuse for more about our work.