What makes one citizen science project flourish while another flounders? Is there a foolproof recipe for success when creating a citizen science project? As part of building and helping others build projects that ask the public to contribute to diverse research goals, we think and talk a lot about success and failure at the Zooniverse.

But while our individual definitions of success overlap quite a bit, we don’t all agree on which factors are the most important. Our opinions are informed by years of experience, yet before this year we hadn’t tried incorporating our data into a comprehensive set of measures — or “metrics”. So when our collaborators in the VOLCROWE project proposed that we try to quantify success in the Zooniverse using a wide variety of measures, we jumped at the chance. We knew it would be a challenge, and we also knew we probably wouldn’t be able to find a single set of metrics suitable for all projects, but we figured we should at least try to write down one possible approach and note its strengths and weaknesses so that others might be able to build on our ideas.

The results are in Cox et al. (2015).

In this study, we only considered projects that were at least 18 months old, so that all the projects considered had a minimum amount of time to analyze their data and publish their work. For a few of our earliest projects, we weren’t able to source the raw classification data and/or get the public-engagement data we needed, so those projects were excluded from the analysis. We ended up with a case study of 17 projects in all (plus the Andromeda Project, about which more in part 2).

The full paper is available here (or here if you don’t have academic institutional access), and the purpose of these blog posts is to summarize the method and discuss the implications and limitations of the results.

Methods

The overall goal of the study was to combine different quantitative measures of Zooniverse projects along 2 axes: “Contribution To Science” and “Public Engagement”.

A fair bit of ink has been spilled in the academic literature about the key outcomes that point to success for a citizen science project. This work brought many of those together (and adapted some of them), combining similar measures into categories. These included some basic measures like:

- number of classifications;

- number of volunteers;

- number of posts on the project forum/Talk;

- number of posts by research team members on the forum/Talk;

- number of posts on blogs and social media; and

- number of publications.

To help compare different projects, our study controlled each of these measures for either the project age (the time between the start of the project and now) or its duration (the number of days the project was actively collecting classifications), whichever was more appropriate.
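As a rough illustration of what controlling for age or duration can look like, here is a minimal sketch that turns raw counts into per-day rates. It assumes the control is a simple division by the number of days; the project dates, the counts, and the choice of which measure goes with which denominator are all made up for the example and are not taken from the paper.

```python
from datetime import date


def per_day(count, days):
    """Express a raw count as a daily rate."""
    if days <= 0:
        raise ValueError("days must be positive")
    return count / days


# Hypothetical project: every number below is invented for illustration.
launch = date(2012, 1, 1)
today = date(2014, 1, 1)
age_days = (today - launch).days  # project age: launch until "now"
active_days = 400                 # duration: days spent collecting classifications

# Assumption: classifications are compared per active day,
# publications per day of project age.
classifications_per_active_day = per_day(1_000_000, active_days)
publications_per_day_of_age = per_day(4, age_days)
```

Dividing by duration rather than age avoids penalizing a project that finished its data set quickly and then stopped collecting classifications.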

There were also more advanced measures, such as:

- the classification Gini coefficient, which measures how the workload in each project is distributed among the volunteers;

- the fraction of volunteers who only completed the tutorial and never actually classified;

- the count of research papers written with project volunteers as named co-authors; and

- the amount of time someone would have to work as a full-time employee to produce the project’s classifications.
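For readers who haven't met the Gini coefficient before, here is a minimal sketch of how it can be computed from a list of per-volunteer classification counts. It uses the standard rank-weighted formula; the function name and the example counts are hypothetical, and the paper may handle details (such as volunteers with zero classifications) differently.

```python
def gini(counts):
    """Gini coefficient of per-volunteer classification counts.

    0 means every volunteer did the same amount of work; values near 1
    mean a small number of volunteers did almost all of it.
    """
    values = sorted(counts)
    n = len(values)
    total = sum(values)
    if n == 0 or total == 0:
        raise ValueError("need at least one volunteer and one classification")
    # Rank-weighted formula on ascending-sorted values:
    # G = 2 * sum(i * x_i) / (n * sum(x)) - (n + 1) / n, with ranks i from 1
    weighted = sum(rank * x for rank, x in enumerate(values, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n


# Hypothetical projects with four volunteers each.
evenly_shared = gini([5, 5, 5, 5])
one_person_does_it_all = gini([0, 0, 0, 10])
```

With only four volunteers the second case comes out at 0.75 rather than 1, because the maximum possible value for n volunteers is (n - 1) / n.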

Additionally, we collected some measures that we didn’t end up using, such as the fraction of forum/Talk threads that were conversations as opposed to single comments, the typical length of forum/Talk posts, and various measures of the popularity of project blogs and social media accounts. While we’d like to include these in a future analysis that allows for additional nuances, the idea behind this study was a clean aggregation and combination of relatively straightforward quantitative measures.

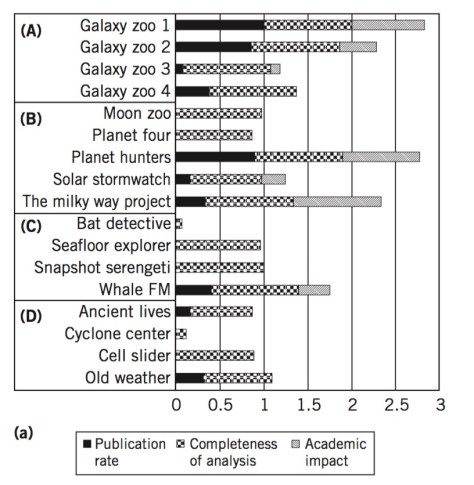

Once we’d collected and normalized all our data across projects, the data was combined by category into quantitative measures for each project. Each of the 4 categories (called performance indicators in the paper) was made up of 3 individual measures. Here’s what the “Data Value” performance indicator looks like for the projects:

A couple of notes here:

- The ABCD categories are: Galaxy Zoo, Other Astronomy, Ecology, and Other.

- The reference to Old Weather throughout this study is actually to the latest Old Weather project, which began collecting classifications in mid-2012. I’ll discuss Old Weather in much greater detail in part 2.

There are 4 charts like this, 2 for “Contribution to Science” and 2 for “Public Engagement”. In the interest of further distilling things down into a “simple” measure of success, the study combines both charts for each broad measure into 1 number, so that “Public Engagement” and “Contribution to Science” can be plotted against one another:

This is the Zooniverse Project Success Matrix from Cox et al. (2015). Note that the drawn axes mark the average values, not zero. The numbers themselves are more or less meaningless anyway, because measures with different units were combined and everything has been normalized across projects.
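To make the "normalize, combine, then draw the axes at the average" idea concrete, here is a minimal sketch. This post doesn't spell out the exact normalization or weighting used in the paper, so the min-max rescaling and the unweighted mean below are assumptions, and the measure names and values are invented.

```python
from statistics import mean


def rescale(values):
    """Min-max rescale one measure to the range [0, 1] across projects."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def combine(*measures):
    """Average several rescaled measures, project by project."""
    columns = [rescale(m) for m in measures]
    return [mean(scores) for scores in zip(*columns)]


# Made-up values for three hypothetical projects.
classifications_per_day = [100.0, 200.0, 400.0]
publications_per_year = [1.0, 3.0, 2.0]

# One combined score per project; the units are gone after rescaling.
science_score = combine(classifications_per_day, publications_per_year)

# Where an axis would be drawn: at the average score, not at zero.
axis_position = mean(science_score)
```

Because each measure is rescaled relative to the other projects in the sample, a project's score only says how it compares with that group, which is why the absolute numbers carry little meaning on their own.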

In the next post, I’ll discuss some of the implications and limitations of this way of measuring project success.
